Big data has become an integral part of industry. The challenges of both data management and data analysis keep growing, and to overcome them, manufacturers have been looking at new requirements that support the big data era. Organizations use big data tools mostly to harness their data and identify new opportunities.

Although the concept has been around for many years, companies were long complacent about adopting these tools. That changed once businesses realized how important they are. The main advantages that big data tools bring to the table are efficiency and speed: because the tools work faster and more efficiently, the organizations that use them gain a competitive edge. These tools are cost-effective as well.

Industrial big data analytics depends mainly on the following five methodologies:

- Industrial data ingestion: access and integrate highly distributed data sources from various systems, devices, and applications.

- Industrial big data repository: cope with sampling biases and heterogeneity, and store different data formats and structures.

- Industrial large-scale data management: organize massive heterogeneous data and share large-scale data.

- Industrial data analytics: track data provenance, from data generation through data preparation.

- Industrial data governance: ensure data trust, integrity, and security.

Listed below are 15 of the most efficient, user-friendly big data tools that organizations use on a daily basis:

1. Apache Hadoop

Most data scientists are familiar with Apache Hadoop. It is an open-source software framework that handles big data via the MapReduce programming model. Its primary strength is the Hadoop Distributed File System (HDFS), which can hold all kinds of data (videos, images, JSON, XML, and plain text) on the same file system. The software is written in Java and provides cross-platform support. Hadoop is used by over half of the Fortune 50, and companies associated with it include Amazon Web Services, Hortonworks, IBM, Intel, Microsoft, and Facebook. The software is free to use under the Apache License.
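The MapReduce model mentioned above can be illustrated with a minimal, single-process sketch. This is not Hadoop's API: the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `word_count`) are hypothetical and chosen only to show the three stages that a real cluster would distribute across many machines.

```python
from collections import defaultdict


def map_phase(record):
    """Map: emit a (word, 1) pair for every word in one input record."""
    for word in record.lower().split():
        yield word, 1


def shuffle_phase(pairs):
    """Shuffle: group all emitted values by their key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce: combine the values collected for one key into one result."""
    return key, sum(values)


def word_count(records):
    """Run map, shuffle, and reduce in sequence on an in-memory list."""
    pairs = (pair for record in records for pair in map_phase(record))
    groups = shuffle_phase(pairs)
    return dict(reduce_phase(key, values) for key, values in groups.items())


if __name__ == "__main__":
    # Hypothetical sample input, used only for illustration.
    print(word_count(["big data tools", "big data era"]))
```

In a real deployment the map and reduce steps would run in parallel on different nodes, with the framework handling the shuffle; the sketch only shows how the three stages fit together.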

2. Xplenty

Xplenty is a big data tool for integrating, processing, and preparing data for the cloud. It is a holistic toolkit for building data pipelines with low-code and no-code capabilities. Its graphical interface helps users implement an ETL, ELT, or replication solution. It removes the need to invest in hardware, software, or related personnel. Customer support is available via email, phone calls, and online meetings. Companies using Xplenty include Targeted Victory, Xenon Ventures, Litmus, Fresh & Easy, and others. It has a subscription-based pricing model.

3. MongoDB

MongoDB is a NoSQL document-oriented big data tool. It is an open-source tool written in C, C++, and JavaScript. It supports a variety of operating systems including Windows Vista, OS X, Linux, Solaris, and FreeBSD. It is considered a strong choice for semi-structured or unstructured data sets that change frequently. Its features include aggregation, ad hoc queries, sharding, indexing, and so on. Big names associated with MongoDB include Facebook, eBay, MetLife, and Google. The SMB and enterprise versions are paid, and pricing is available on request.
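To make the document-oriented idea concrete, here is a small sketch using plain Python dictionaries. It does not use the MongoDB driver or its query syntax; the sample `documents` and the helpers `find` and `total_by` are hypothetical and only mimic what ad hoc queries and aggregation do over records whose fields vary.

```python
from collections import defaultdict

# Hypothetical documents: each record may carry a different set of fields.
documents = [
    {"user": "ana", "country": "BR", "purchases": 3},
    {"user": "ben", "country": "US", "purchases": 5, "tags": ["vip"]},
    {"user": "caz", "country": "BR"},  # no "purchases" field at all
]


def find(docs, **criteria):
    """Ad hoc query: return documents whose fields match every criterion."""
    return [
        doc for doc in docs
        if all(doc.get(field) == value for field, value in criteria.items())
    ]


def total_by(docs, group_field, sum_field):
    """Aggregation: sum one field per group, counting a missing field as 0."""
    totals = defaultdict(int)
    for doc in docs:
        totals[doc.get(group_field)] += doc.get(sum_field, 0)
    return dict(totals)


if __name__ == "__main__":
    print(find(documents, country="BR"))
    print(total_by(documents, "country", "purchases"))
```

Because no fixed schema is enforced, the third record can omit `purchases` and the second can add `tags` without any migration, which is the property that makes this model convenient for data that changes frequently.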

4. Cassandra

This open-source big data tool was first developed by Facebook as a NoSQL solution. It is built to handle vast volumes of data across many commodity servers. The Cassandra Query Language (CQL) provides a simple interface for interacting with the database. The high-performing Apache Cassandra is free of cost and scales linearly. It is linked to big names like Accenture, American Express, Facebook, Honeywell, Yahoo, and others.

5. Drill

Also an Apache project, Drill is an open-source framework. This tool lets data scientists and developers run interactive analyses of large-scale datasets. It supports plenty of file systems and databases such as MongoDB, HDFS, Amazon S3, Google Cloud Storage, and others, which shows its versatility. It was designed to scale to 10,000+ servers and can process petabytes of data and millions of records in seconds. UnitedHealth Group, LPL Financial, JPMorgan Chase, and other companies are associated with Apache Drill. As an Apache project, it is free to use under the Apache License.

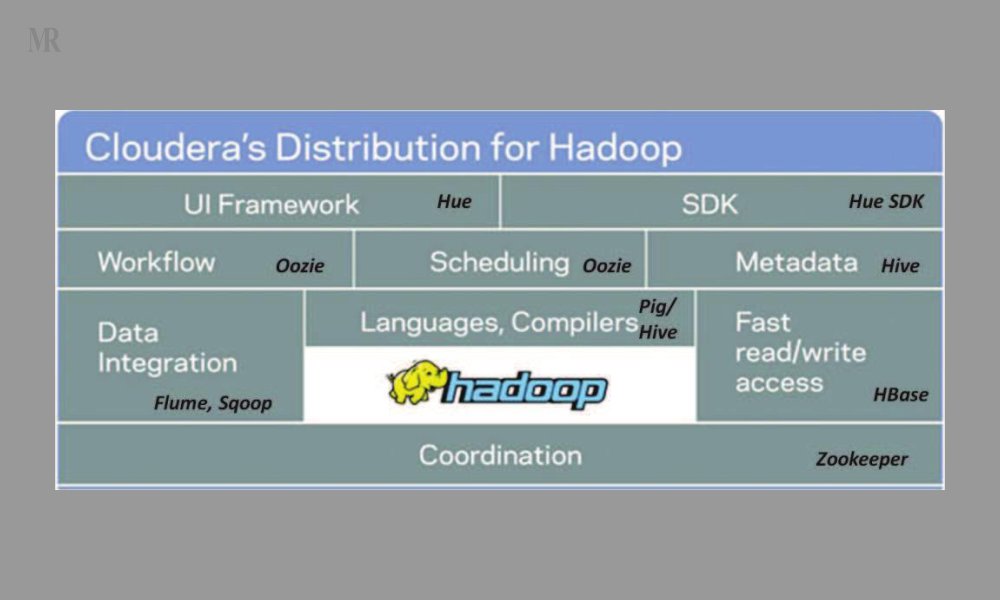

6. Cloudera Distribution for Hadoop (CDH)

CDH is an open-source distribution that includes several Apache projects, such as Apache Hadoop, Apache Spark, Apache Impala, and others. This big data tool allows one to collect, process, administer, manage, discover, model, and distribute unlimited data. It is easy to implement and offers strong security and governance. CDH is the free software version offered by Cloudera. However, the Hadoop cluster costs around $1,000 to $2,000 per terabyte on a per-node basis. Cloudera serves companies like QA Limited, Willis Towers Watson, Stanley Black & Decker Inc., etc.

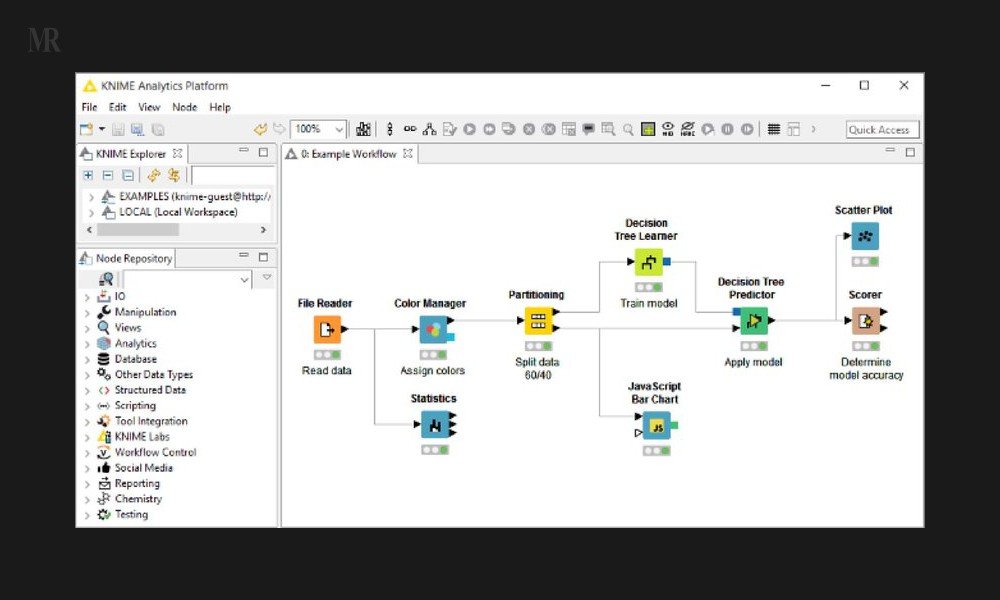

7. Knime

Konstanz Information Miner, or KNIME, is an open-source big data tool. It is considered a good alternative to the Statistical Analysis System (SAS). It has a rich algorithm set and integrates well with other technologies and languages. The tool can be used for enterprise reporting, CRM, data mining, data analytics, text mining, and business intelligence. Windows, Linux, and OS X are supported. Top companies that use KNIME include Comcast, Johnson & Johnson, Canadian Tire, and others. The KNIME platform is free of cost, and other commercial products are also offered on top of the KNIME analytics platform.

8. Datawrapper

Datawrapper is known for generating simple, precise, and embeddable charts very quickly. It is an open-source platform that works well on any device. It has excellent customization and export options. It responds quickly, and no coding is required to use it. The major companies using Datawrapper are mostly newsrooms, including The Times, Bloomberg, Fortune, Mother Jones, etc. The software offers a free service as well as customizable paid options.

9. Elasticsearch

Elasticsearch was developed in Java and released under the Apache License. It is an open-source, cross-platform search engine based on Lucene. It is often used as an integrated solution together with Logstash, a data collection and log parsing engine, and Kibana, an analytics and visualization platform. The three products together are called the Elastic Stack. A core function of Elasticsearch is to support data discovery apps with its very fast search engine. A few high-profile names linked to Elasticsearch are Uber, Udemy, Shopify, Instacart, Robinhood, and others. After an initial free trial, it is available in standard, gold, platinum, and enterprise versions.

10. Lumify

Lumify is an open-source big data tool for big data fusion, analytics, and visualization. Some impressive features are 2D and 3D graph visualizations, automatic layouts, integration with mapping systems, and real-time collaboration through a set of projects or workspaces. It has a team dedicated to full-time development. It supports cloud-based environments and works well with Amazon's AWS. This tool is free of cost.

11. Talend

Talend comes under a free and open-source license. It consists of three integration products for data processing: Open Studio for Big Data, Big Data Platform, and Real-Time Big Data Platform. Each of these products has its own features. Talend handles multiple data sources and speeds up one's work, largely by providing numerous connectors in a single place. Open Studio for Big Data is free, while the other products are priced by subscription. A few of the companies using this big data tool are Wells Fargo, Emergent BioSolutions, and Axtria – Ingenious Insights.

12. HPCC

HPCC was developed by LexisNexis Risk Solutions. Standing for High-Performance Computing Cluster, HPCC is a big data solution on a highly scalable supercomputing platform. It is also called Data Analytics Supercomputer (DAS). It is a good substitute for Hadoop and other platforms and is based on the Thor architecture, which supports data parallelism, pipeline parallelism, and system parallelism. It is written in C++ and Enterprise Control Language (ECL), which is a data-centric programming language. HPCC is fast, powerful, and highly scalable. It serves companies like Aptiv, 3LOQ Labs, and Viacom and is free of cost.

13. Storm

An Apache project, Storm is a cross-platform, fault-tolerant, real-time computational framework. It was originally developed by BackType and Twitter. It is free and open-source and is written in Clojure and Java. The architecture is based on customized spouts and bolts, which describe the information sources and the processing applied to them. It serves multiple purposes: real-time analytics, log processing, ETL (Extract-Transform-Load), and others. This big data tool is free of cost. Some famous organizations using Apache Storm are Yahoo, Alibaba, and The Weather Channel.
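The spout-and-bolt idea can be sketched with Python generators. This is not Storm's API and it runs in a single process; the names `sentence_spout`, `split_bolt`, `count_bolt`, and `run_topology` are hypothetical, and the sketch assumes the usual reading that a spout is a stream source while a bolt consumes a stream and emits a transformed one.

```python
from collections import Counter


def sentence_spout(sentences):
    """Spout: the source of the stream, emitting one item at a time."""
    for sentence in sentences:
        yield sentence


def split_bolt(stream):
    """Bolt: consume sentences and emit individual lowercase words."""
    for sentence in stream:
        for word in sentence.lower().split():
            yield word


def count_bolt(stream):
    """Bolt: keep a running count and emit (word, count) on every update."""
    counts = Counter()
    for word in stream:
        counts[word] += 1
        yield word, counts[word]


def run_topology(sentences):
    """Wire spout -> split bolt -> count bolt and keep the latest counts."""
    latest = {}
    for word, count in count_bolt(split_bolt(sentence_spout(sentences))):
        latest[word] = count
    return latest


if __name__ == "__main__":
    # Hypothetical input stream, used only for illustration.
    print(run_topology(["storm processes streams", "storm scales"]))
```

Each stage handles items as they arrive rather than waiting for the whole input, which is the property that distinguishes this streaming style from batch processing.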

14. Samoa

Also an Apache project, SAMOA stands for Scalable Advanced Massive Online Analysis. This open-source platform is used for big data stream mining and machine learning. One can create machine learning algorithms and run them on many Distributed Stream Processing Engines (DSPEs). It follows a Write Once Run Anywhere (WORA) architecture. Apache SAMOA serves Infiflex Technologies PVT LTD and the Peace Corps of the US government. The tool is free of cost.

15. Rapidminer

Rapidminer is a cross-platform big data tool. It provides an integrated environment for data science, machine learning, and predictive analytics. The free edition is limited to one logical processor and up to 10,000 data rows. Small, medium, and large proprietary editions are also available. It makes front-line data science tools and algorithms easy to use. Customer service and technical support are well regarded. Organizations including Hitachi, BMW, Samsung, and Airbus use Rapidminer. The price starts from $2,500.
